Graph Neural Networks (GNNs) have become a prevailing tool for learning physical dynamics. However, they still face several challenges: 1) Physical laws obey symmetry, a vital inductive bias for model generalization that should be built into the model design. Existing simulators either consider insufficient symmetry or, in practice, enforce excessive equivariance when the symmetry is partially broken by gravity. 2) Objects in the physical world possess diverse shapes, sizes, and properties, which the model should handle appropriately. To tackle these difficulties, we propose a novel backbone, the Subequivariant Graph Neural Network, which 1) relaxes equivariance to subequivariance by accounting for external fields like gravity, while the universal approximation ability still holds in theory; 2) introduces a new subequivariant object-aware message passing scheme for learning physical interactions between multiple objects of various shapes in a particle-based representation; 3) operates in a hierarchical fashion, allowing it to model long-range and complex interactions. Compared with state-of-the-art GNN simulators, our model achieves an average improvement of over 3% in contact prediction accuracy across 8 scenarios on Physion and a 2× lower rollout MSE on RigidFall, while exhibiting strong generalization and data efficiency.
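Stated formally (our own notation, a sketch rather than the paper's exact definitions): let $\mathbf{g}$ be the gravity vector, $\mathbf{Z}\in\mathbb{R}^{3\times m}$ the stacked vector features of a node or edge (e.g. relative positions and velocities), and $\mathbf{h}$ its invariant scalar features such as mass. Subequivariance asks for equivariance only under the subgroup of $O(3)$ that leaves $\mathbf{g}$ unchanged:

$$
O_{\mathbf{g}}(3) = \{\mathbf{O} \in O(3) : \mathbf{O}\mathbf{g} = \mathbf{g}\}, \qquad
f(\mathbf{O}\mathbf{Z}, \mathbf{h}) = \mathbf{O}\, f(\mathbf{Z}, \mathbf{h}) \quad \text{for all } \mathbf{O} \in O_{\mathbf{g}}(3).
$$

This subgroup consists of the rotations about the gravity axis and the reflections through planes containing it. Full $O(3)$-equivariance would demand the identity for every orthogonal $\mathbf{O}$, which gravity-driven dynamics violate.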

SGNN Architecture

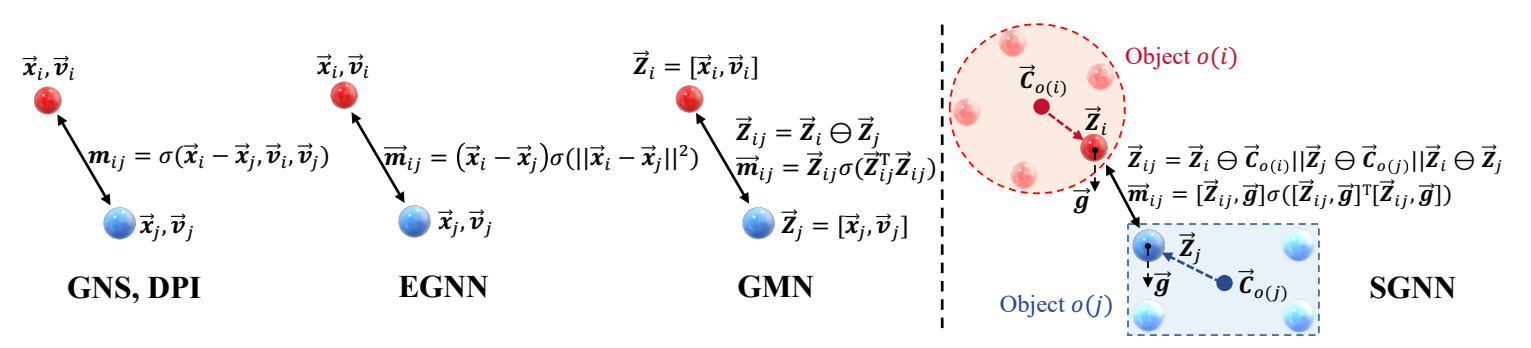

SOMP. First, our Subequivariant Graph Neural Network (SGNN) is equipped with the Subequivariant Object-aware Message Passing (SOMP) mechanism depicted in the figure below. SOMP characterizes the rich geometric information exchanged between two interacting objects while preserving the desired symmetry, e.g., equivariance to rotations about the gravity axis. Compared with existing graph network simulators [Sanchez-Gonzalez et al., 2020; Li et al., 2018; Satorras et al., 2021], SOMP produces dynamics that better respect this physical prior, and thus generalizes strongly across different evaluation scenarios. We also show theoretically that SOMP can universally approximate any subequivariant function, i.e., any function whose equivariance is broken by external force fields.
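A minimal NumPy sketch of one way to build a subequivariant function, assuming the scalarization approach common to equivariant GNNs such as EGNN: append $\mathbf{g}$ to the vector inputs, form the invariant Gram matrix of $\tilde{\mathbf{Z}} = [\mathbf{Z}, \mathbf{g}]$, map it through an arbitrary function to coefficients, and return a linear combination of the columns of $\tilde{\mathbf{Z}}$. The random weights, `mlp`, `subequivariant_fn`, and `rotation_about` are hypothetical stand-ins for illustration, not SGNN's actual SOMP layer.

```python
import numpy as np

rng = np.random.default_rng(0)

M = 2  # number of input vectors per node (e.g. relative position, velocity)
H = 1  # number of invariant scalar features (e.g. mass)
K = 1  # number of output vectors

# Hypothetical random weights standing in for a learned MLP.
W1 = rng.normal(size=((M + 1) ** 2 + H, 32))
W2 = rng.normal(size=(32, (M + 1) * K))


def mlp(x):
    """Arbitrary function of invariant scalars (stand-in for a trained MLP)."""
    return np.tanh(x @ W1) @ W2


def subequivariant_fn(Z, h, g):
    """Map vectors Z (3 x M) and scalars h (H,) to K output vectors (3 x K).

    Equivariant under every orthogonal O with O @ g == g, by construction.
    """
    Z_tilde = np.concatenate([Z, g[:, None]], axis=1)  # 3 x (M+1): append gravity
    gram = Z_tilde.T @ Z_tilde                         # invariant under O_g(3)
    coeffs = mlp(np.concatenate([gram.ravel(), h]))    # invariant coefficients
    return Z_tilde @ coeffs.reshape(M + 1, K)          # transforms like Z


def rotation_about(axis, angle):
    """Rotation matrix about `axis` by `angle` (Rodrigues' formula)."""
    a = axis / np.linalg.norm(axis)
    S = np.array([[0.0, -a[2], a[1]], [a[2], 0.0, -a[0]], [-a[1], a[0], 0.0]])
    return np.eye(3) + np.sin(angle) * S + (1 - np.cos(angle)) * S @ S


g = np.array([0.0, 0.0, -9.8])
Z = rng.normal(size=(3, M))
h = rng.normal(size=H)

R_g = rotation_about(g, 0.7)                          # keeps g fixed
R_x = rotation_about(np.array([1.0, 0.0, 0.0]), 0.7)  # moves g

err_g = np.abs(subequivariant_fn(R_g @ Z, h, g) - R_g @ subequivariant_fn(Z, h, g)).max()
err_x = np.abs(subequivariant_fn(R_x @ Z, h, g) - R_x @ subequivariant_fn(Z, h, g)).max()
print(err_g, err_x)  # err_g is round-off sized; err_x is not
```

Building the same construction from $\mathbf{Z}$ alone, without the appended gravity column, would give a fully $O(3)$-equivariant function; appending $\mathbf{g}$ is what relaxes the symmetry to rotations and reflections that fix gravity.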

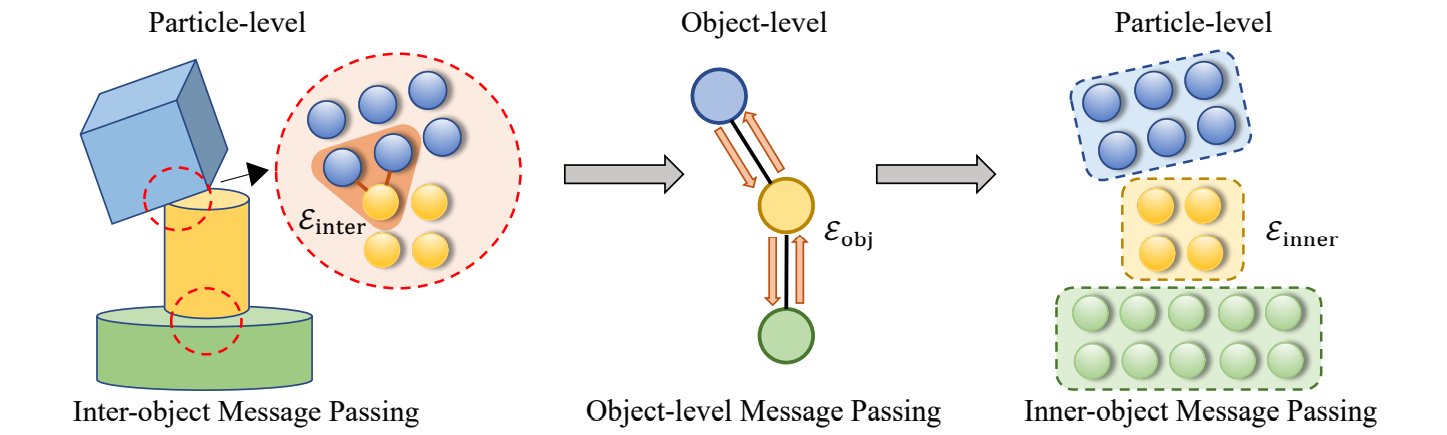

Overall architecture. Many physical scenes are complicated, possibly involving contact, collision, and friction among multiple objects. In light of this, we further incorporate our message passing into a multi-stage hierarchical modeling framework. One interesting finding here concerns edge separation: interactions within an object usually differ from interactions between objects, so it is beneficial to disentangle these two kinds of interactions in message passing. The overall flowchart is provided in the figure below. We alternate between three stages, each of which implements SOMP in its own way.
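The edge separation idea can be illustrated with a small sketch, assuming a particle-based scene in which each particle carries an object id and edges connect particles closer than a hypothetical cutoff `radius`. Edges whose endpoints share an object id are treated as intra-object, the rest as inter-object, so each group could be fed to its own message function. The helpers `radius_edges` and `separate_edges` are illustrative, not SGNN's graph-construction code.

```python
import numpy as np


def radius_edges(pos, radius):
    """All directed edges (i, j), i != j, with |pos[i] - pos[j]| < radius."""
    diff = pos[:, None, :] - pos[None, :, :]  # N x N x 3 pairwise offsets
    dist = np.linalg.norm(diff, axis=-1)
    mask = (dist < radius) & ~np.eye(len(pos), dtype=bool)
    senders, receivers = np.nonzero(mask)
    return senders, receivers


def separate_edges(senders, receivers, obj_id):
    """Split edges into intra-object and inter-object sets by comparing object ids."""
    same = obj_id[senders] == obj_id[receivers]
    intra = (senders[same], receivers[same])
    inter = (senders[~same], receivers[~same])
    return intra, inter


# Two objects with two particles each; particles 1 and 2 are close enough to interact.
pos = np.array([[0.0, 0.0, 0.0],
                [0.5, 0.0, 0.0],
                [0.9, 0.0, 0.0],
                [1.4, 0.0, 0.0]])
obj_id = np.array([0, 0, 1, 1])

s, r = radius_edges(pos, radius=0.6)
(intra_s, intra_r), (inter_s, inter_r) = separate_edges(s, r, obj_id)
print("intra:", list(zip(intra_s.tolist(), intra_r.tolist())))  # [(0, 1), (1, 0), (2, 3), (3, 2)]
print("inter:", list(zip(inter_s.tolist(), inter_r.tolist())))  # [(1, 2), (2, 1)]
```

Keeping the two edge sets apart lets within-object structure and between-object contacts be modeled with separate parameters rather than one shared message function.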

Performance on Dynamics Simulation

We benchmark SGNN on Physion [Bear et al., 2021], a large-scale, challenging dataset, as well as on RigidFall [Li et al., 2020]. On dynamics prediction, SGNN predicts high-fidelity trajectories in a highly data-efficient manner and outperforms the baselines. The following animation shows qualitative comparisons between SGNN and the baselines. Please refer to our paper for comprehensive quantitative results and ablation studies.
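Rollout quality is typically measured by feeding a simulator's own predictions back in for many steps and averaging the squared position error against the ground truth. A minimal sketch, assuming a hypothetical one-step predictor `step(x_prev, x_curr) -> x_next` over particle positions; the exact evaluation protocol for Physion and RigidFall (horizon, normalization, which particles are scored) is described in the paper and not reproduced here.

```python
import numpy as np


def rollout(step, x0, x1, n_steps):
    """Autoregressively unroll a one-step position predictor from two seed frames.

    Returns an array of shape (n_steps, N, 3) holding only the predicted frames.
    """
    frames = [x0, x1]
    for _ in range(n_steps):
        frames.append(step(frames[-2], frames[-1]))  # prediction becomes next input
    return np.stack(frames[2:])


def rollout_mse(pred, target):
    """Mean squared error over all predicted frames, particles, and coordinates."""
    return float(np.mean((pred - target) ** 2))


def constant_velocity(x_prev, x_curr):
    """Hypothetical toy predictor: extrapolate with the last observed velocity."""
    return 2 * x_curr - x_prev


x0 = np.zeros((1, 3))
x1 = np.array([[0.1, 0.0, 0.0]])
pred = rollout(constant_velocity, x0, x1, n_steps=3)  # x = 0.2, 0.3, 0.4
target = np.array([[[0.2, 0.0, 0.0]], [[0.3, 0.0, 0.0]], [[0.5, 0.0, 0.0]]])
print(rollout_mse(pred, target))
```

Because each prediction is reused as input, small one-step errors compound over the horizon, which is why rollout MSE is a stricter test than single-step error.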

Generalization to Unseen Test Data

We further evaluate how well SGNN generalizes to unseen test-time modifications of the data, in order to verify that our design indeed equips SGNN with the desired physical inductive bias. The experiments cover three aspects.

1. Random rotation about the gravity axis. Since SGNN satisfies subequivariance, it naturally generalizes to test data rotated about the gravity axis, even when trained on data with a directional bias. Among the baselines, GNS [Sanchez-Gonzalez et al., 2020] and DPI [Li et al., 2018] do not explicitly satisfy equivariance, while EGNN [Satorras et al., 2021], an E(n)-equivariant model, imposes an overly strong equivariance constraint that ignores the broken symmetry along the gravity axis. SGNN, with the help of SOMP, relaxes this constraint and bridges the gap between training and testing data.

2. Random rotation about a non-gravity axis. Beyond rotations about the gravity axis, it is also interesting to check whether breaking equivariance, as characterized by our subequivariance, indeed helps the model correctly learn the effect of gravity. Strong evidence for this comes from how the predicted trajectory behaves when we apply a test-time rotation about a non-gravity axis. In the animation below, we find, somewhat surprisingly, that SGNN correctly predicts the gravity-driven dynamics even when trained only on horizontally placed scenarios. This observation verifies the efficacy of our proposed equivariance relaxation.

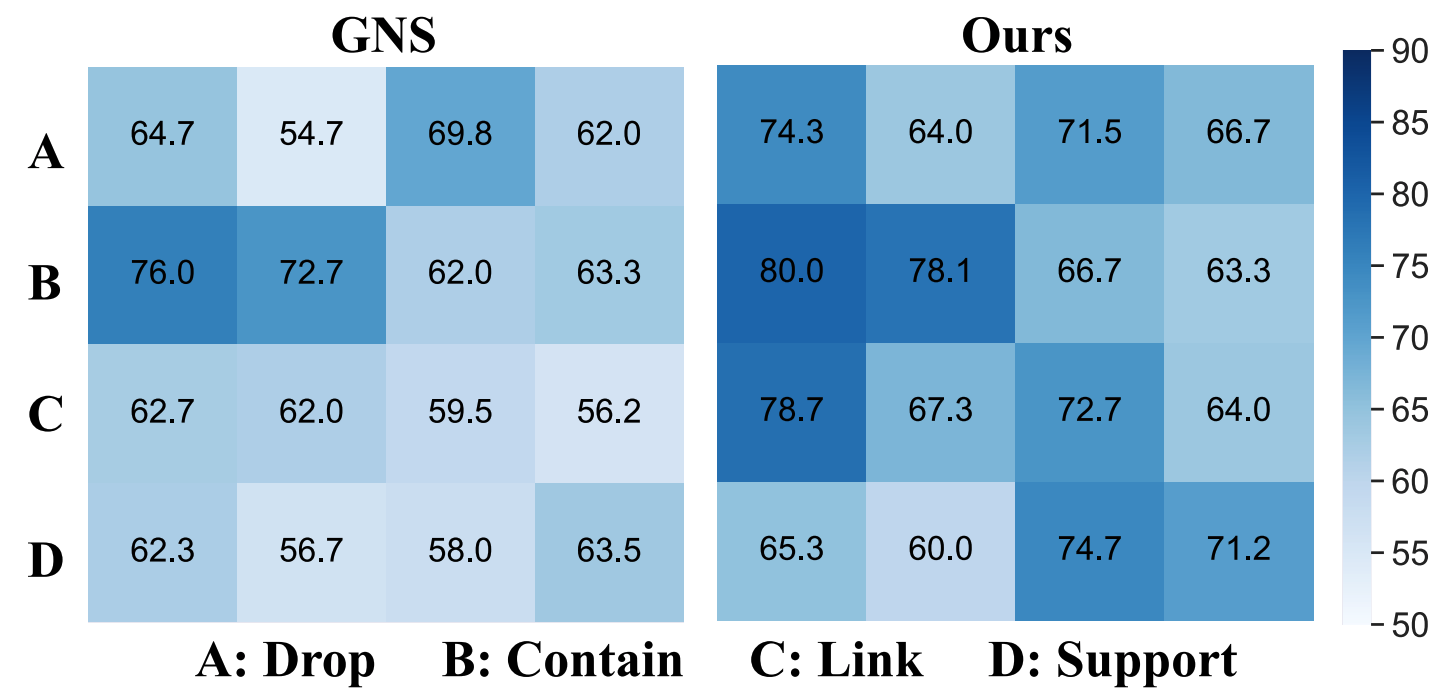

3. Generalization across different scenarios. We further assess the cross-scenario generalization of SGNN and compare it with GNS on the Physion dataset. The heatmap above shows the contact accuracy when the model is trained on the scenario indexed by each row and tested on the scenario indexed by each column. SGNN performs well, outperforming the baseline by a large margin.
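The reasoning behind experiments 1 and 2 can be checked on the simplest gravity-driven system. A minimal sketch, assuming a single particle in free fall integrated with a semi-implicit Euler step (our toy stand-in for a real simulator, with gravity along -z): rotating the initial state about the gravity axis simply rotates the resulting trajectory, but rotating it about a horizontal axis does not, because gravity itself stays fixed. A function of positions and velocities that is equivariant to all rotations therefore cannot reproduce this map exactly, whereas a subequivariant one can.

```python
import numpy as np

G = np.array([0.0, 0.0, -9.8])  # gravity, assumed along -z
DT = 0.01


def free_fall(x, v, n_steps=50):
    """Semi-implicit Euler integration of a particle under gravity only."""
    for _ in range(n_steps):
        v = v + DT * G
        x = x + DT * v
    return x, v


def rot_z(t):
    """Rotation about the z (gravity) axis."""
    c, s = np.cos(t), np.sin(t)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def rot_x(t):
    """Rotation about the horizontal x axis."""
    c, s = np.cos(t), np.sin(t)
    return np.array([[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]])


x0 = np.array([1.0, 0.0, 2.0])
v0 = np.array([0.5, 0.2, 0.0])
x_ref, _ = free_fall(x0, v0)

gaps = {}
for name, R in [("about gravity axis", rot_z(0.8)), ("about x axis", rot_x(0.8))]:
    x_rot, _ = free_fall(R @ x0, R @ v0)             # simulate the rotated scene
    gaps[name] = np.linalg.norm(x_rot - R @ x_ref)   # zero iff dynamics commute with R
print(gaps)
```

The nonzero gap under the horizontal rotation is exactly the symmetry breaking that subequivariance preserves and full equivariance would erase.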